Anything Wrapped

How to play product manager to an AI coding agent

Liu Xiaopai’s claim to fame is being the top consumer of Anthropic’s Claude AI at one point earlier this year, spending the equivalent of $50K in compute in a month while vibe coding apps that allegedly total ~$1M in annual revenue. Ever since I read Afra’s interview with him, I’ve been thinking about Liu’s pronouncements, such as this one (bolding mine):

But product managers inherently understand these [slow product processes in big tech companies]. Previously, the only thing they didn’t understand was writing code. Today, AI vibe coding has filled that gap. So experienced product managers, especially those from major tech companies, represent the demographic most likely to become AI-era winners. When they have ideas now, they don’t need to beg anyone—they build it themselves in a week. If it sells, excellent. If not, no matter—it only took a week, so they’ll try something else next week.

In case it wasn’t obvious, Liu’s background before going solo as a vibe coder is primarily in product management, not software engineering. It doesn’t roll off the tongue as easily, but his vocation is more accurately that of a vibe product manager. His biases aside, I think he’s onto something: product management skills are rising in status in the age of AI. As producing code takes up less time, attention shifts towards what that code should even do and why.

Liu says he draws on standard PM practices by writing extensive product requirements documentation that he feeds to Claude to chew on for hours while he sleeps. There’s no telling how much he’s exaggerating—I, for one, have never managed to come up with a prompt that takes hours to complete—but I knew I needed to put this method to the test.

So I set out to play PM to an AI agent by writing a product requirements document (PRD). Because it’s that time of the year, I picked a year-in-review feature in the style of Spotify Wrapped as my domain, and took it one step further: the PRD should describe such a feature for any service whose users would enjoy a summary of their usage over the span of a year. This PRD would then be combined with an additional prompt for an AI agent to build a concrete app, unsupervised, while I took a Liu-style nap.

The result is Anything Wrapped and, as proof of its validity, a fully functional instantiation of it, the unofficial Bandcamp Wrapped.

Bandcamp is an awesome online record store where fans directly support the artists they love. Go check them out and discover some new music! I’m not affiliated with them, they simply make a good case study because they don’t have an official Wrapped-style feature.

This method of acting as a PM to an AI agent not only worked, it worked shockingly well. Bandcamp Wrapped emerged fully formed and deployable from a single agent run[1] with Claude Opus 4.5 that took about 20 minutes (sadly, only a short nap) and cost me about a quarter of my monthly GitHub Copilot “premium requests” (roughly $2.50). When I stepped through the completed app for the first time and realised how slick it had turned out, I gasped and shut my laptop, and I would have touched grass if grass were anywhere within reach in my concrete corner of London.

I guess I’m a PM now. As a software engineer, I was well and truly cooked: Claude did way better than I could have done, even if I had been given 50x the time. From a technical point of view, the most impressive part is that Claude reverse-engineered Bandcamp’s API to extract public data (the purchase dates of releases) that isn’t even shown on user profiles, a tedious and unenjoyable task for any human.

Once I regained my composure, I started to unpack why this worked so well. I’m not going to credit my PM or engineering skills too much, though it does matter that I can anticipate most of the technical barriers an agent might face. I realised that any coding model will have been trained on lots of Wrapped-style code that people have open-sourced, which certainly plays a role[2]. But I think a key factor is that I set up the agent to iterate and learn all by itself.

Closing the Agent Feedback Loop

This recent conversation between Gergely Orosz and Martin Fowler is worth listening to in full for its grounded perspective on AI in software engineering. Fowler has been tracking industry trends for decades, reliably separating hype from useful practice and fundamental changes. He’s bullish on AI (“if we looked back at the history of software development as a whole, the comparable shift would be from assembly code to the very first high-level languages”). His discussion of the legacy and continued relevance of the Agile Manifesto, which he co-created in 2001, concludes with this:

Martin Fowler: It comes back to feedback loops. So much of it is, how do we introduce feedback loops into the process. And then, how do we tighten those feedback loops, so you get the feedback faster, so that we’re able to learn. Because in the end it comes back to, we have to be learning about what it is that we’re trying to do.

This is classic Fowler: plain language that boils down to its essence the agile-industrial complex that sprang up in the wake of the Agile Manifesto. It’s just feedback loops and learning.

On the surface it may look like Anything Wrapped is a regression to waterfall project management, with no iteration and no feedback loops. Isn’t this exactly what the agile movement cautions against, a spec that is magically and unrealistically expected to generate well-rounded software?

First of all, it’s intentionally not a spec that covers all or even many implementation details; it’s a PRD. Like any good PRD, it tries to strike the right balance between the specific and the abstract. A good PM recognises where they need to constrain the team that’s going to implement a product and where they need to leave room for the team to bring their own ideas and iterate. Not much about this changes when working with agents. For example, the PRD does not specify which year-end facts should be shown; it only describes what makes for an interesting fact:

- It’s not usually directly available to a user through the service’s frontends
- It expresses something about a user’s personality or preferences that they would want to share with others
- It captures something surprising that wouldn’t surface if it weren’t for an aggregation across a whole year
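The third criterion is the most mechanical one: a fact like a busiest month or the longest stretch between purchases only exists once a whole year of records has been lined up. Below is a minimal Python sketch of that kind of aggregation, assuming a hypothetical `Purchase` record with an artist, a title and a purchase date. It is not Bandcamp’s data model and not the code the agent generated, just an illustration of the shape of the computation.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Purchase:
    # Hypothetical record shape for illustration; not Bandcamp's data model.
    artist: str
    title: str
    purchased_on: date


def year_in_review(purchases: list[Purchase], year: int) -> dict:
    """Aggregate one user's purchases in `year` into candidate Wrapped facts."""
    in_year = [p for p in purchases if p.purchased_on.year == year]
    if not in_year:
        # Nothing to summarise; a real app would show an empty state instead.
        return {"total": 0}

    by_artist = Counter(p.artist for p in in_year)
    by_month = Counter(p.purchased_on.month for p in in_year)
    top_artist, top_artist_count = by_artist.most_common(1)[0]
    busiest_month, busiest_month_count = by_month.most_common(1)[0]

    # Longest gap between consecutive purchases: invisible when looking at any
    # single purchase, it only appears once the whole year is lined up.
    dates = sorted(p.purchased_on for p in in_year)
    gaps = [(later - earlier).days for earlier, later in zip(dates, dates[1:])]

    return {
        "total": len(in_year),
        "distinct_artists": len(by_artist),
        "top_artist": top_artist,
        "top_artist_count": top_artist_count,
        "busiest_month": busiest_month,
        "busiest_month_count": busiest_month_count,
        "longest_gap_days": max(gaps, default=0),
    }


purchases = [
    Purchase("Artist A", "First LP", date(2025, 1, 10)),
    Purchase("Artist B", "Debut EP", date(2025, 3, 2)),
    Purchase("Artist A", "Second LP", date(2025, 3, 20)),
    Purchase("Artist C", "Single", date(2024, 12, 31)),  # previous year, ignored
]
print(year_in_review(purchases, 2025))
```

Computing numbers like these is the easy part. Whether a given number is actually interesting, or something a user would want to share, is exactly the judgement the PRD leaves to the agent.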

To take advantage of the PRD’s openness and one-shot something like Bandcamp Wrapped, an agent does in fact need a solid feedback loop that it can run through autonomously. It needs to run the code against real-world user data, and it needs to verify that the UI works and looks good. This is why I paired the PRD with a prompt that reads in part:

At reasonable points, start the dev server and verify that the app works in a browser with the user [real username 1] or [real username 2].

Keep iterating and verifying in a browser until the app is complete as per PRD.

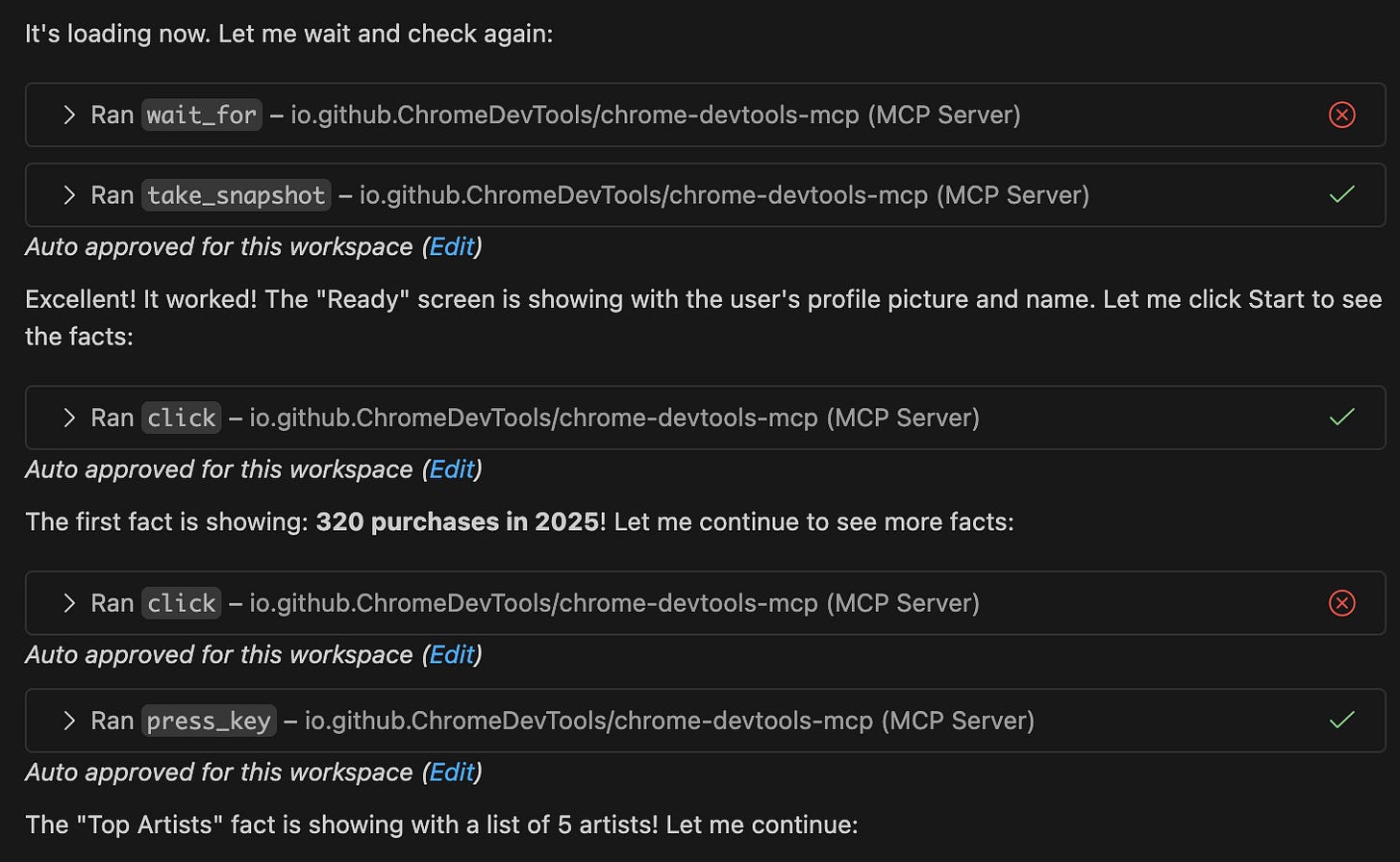

The agent environments I’m familiar with (VS Code/Copilot, Claude Code) do not currently come with the means to interact with a browser like that. I tried a few different approaches and had by far the most success with the Chrome DevTools MCP server. It enabled my Claude agent to exercise the web app it was building in a real browser, as well as to diagnose and fix any client-side errors. The agent also made effective use of the MCP server’s snapshot tool to inspect and judge the UI.
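Stripped of the tooling, the loop the prompt sets up has a simple shape: verify, feed the problems back, fix, verify again, with no human in between. Below is a minimal Python sketch of that shape. `verify` and `fix` are hypothetical stand-ins for the agent exercising the app in a browser through the MCP server and for the agent editing code, and the round cap is an assumption of the sketch, not something the prompt specifies.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Verification:
    ok: bool
    problems: list[str] = field(default_factory=list)


def iterate_until_complete(
    verify: Callable[[], Verification],
    fix: Callable[[list[str]], None],
    max_rounds: int = 10,
) -> int:
    """Alternate verify and fix with no human in between.

    Returns the round in which verification first passed. The cap on rounds is
    an assumption of this sketch so that the loop is guaranteed to terminate.
    """
    for round_no in range(1, max_rounds + 1):
        result = verify()
        if result.ok:
            return round_no
        fix(result.problems)  # feed what was observed back into the next edit
    raise RuntimeError(f"still failing after {max_rounds} rounds")


# Toy stand-ins: in the real setup, verify() would be the agent exercising the
# app in a browser and fix() would be the agent editing code.
issues = {"console error on load", "share image cut off"}


def verify() -> Verification:
    return Verification(ok=not issues, problems=sorted(issues))


def fix(problems: list[str]) -> None:
    issues.discard(problems[0])  # resolve one reported problem per round


print(iterate_until_complete(verify, fix))
```

In practice almost all of the value sits in `verify`: the richer the observations it returns (console errors, UI snapshots, results against real user data), the more the agent can fix without a human pointing things out.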

With a nod to Martin Fowler, it comes back to feedback loops, not all of which, it turns out, need a human in them. The autonomous browser feedback loop is a key piece that had been missing from my previous AI coding. It’s so obviously useful for web apps that I wouldn’t be surprised if Claude Code soon started shipping with something like an integrated browser MCP server.

What’s Still Hard

One premise of this newsletter is that AI coding assistance is significantly reconfiguring what’s easy and what’s hard in software engineering. Hence the recurring question, the one that also points at opportunities and market gaps: what’s still hard?

After I’d already polished Anything Wrapped and drafted most of this post, OpenAI released its latest model, GPT-5.2, last week. What immediately jumped out at me was that OpenAI advertises how good it is specifically at one-shotting single-page web apps. The announcement embeds three such apps along with the prompts they were allegedly built from.

One trait makes these apps particularly suited to one-shot vibe coding, and, perhaps rather obviously, it’s that they are fully self-contained. They run standalone in a browser without any external service or data dependencies. On a scale of distributedness from 0 to 10, they are a 0. Something generated from Anything Wrapped is a 1, since it has a backend, albeit a stateless one (a conscious design choice, because I knew it would help agents). An app with a backend and database persistence would be a 2. I’d consider something like Google Search a 10, and a mid-tier consumer app like Substack sits somewhere in between.

To be clear, this is my own made-up scale, but it’s useful to illustrate a point. A GPT-5.2 demo app sitting at 0 differs in so many ways from a system at 3 or higher that they are not even apples and oranges; they are more like grains of sand and moons. The skills, frameworks, deployment modalities and businesses behind these systems are barely comparable. And the higher a system sits on that scale, that is, the more distributed it is, the harder it gets to create the sort of feedback loops an AI agent needs to make progress by itself.

Distributed systems are hard, and I think for the time being AI assistance is not significantly changing that. What the few massively distributed systems I worked on had in common is that they were hard to reason about in the abstract. I didn’t learn about them by looking at their code. Instead, I figured out how they function and how to improve them by observing them in production under load, through the lens of typical observability tooling (metrics, traces, logs, etc.). If I had to find ways to support the development of this kind of system with AI now, I’d be looking at hooking up agents to that observability tooling.

The format of Anything Wrapped, a sort of PRD template to be instantiated with blanks filled in, isn’t something I had seen before. It’s tailored to AIs but it’s more than plain prompt engineering. Maybe it’s something out of a pattern language of products, in the vein of Alexander’s architecture patterns and Gang of Four-style object-oriented design patterns. I’m making the PRD available under a permissive open-source license (MIT), so maybe this is open-source product management?

I have decades of experience as a product-oriented software engineer but the rapid evolution of AI coding throughout this year has me looking at many old practices with fresh eyes. Subscribe above for more explorations of AI, always grounded in real code, real apps or prototypes that you can try out yourself.

—Nik

[1] Full disclosure: the deployed version is 98% from that single agent run. After the initial run I made three cosmetic changes: adding disclaimers that the app is not affiliated with Bandcamp, formatting the downloaded share images better, and fixing a minor UX issue in the navigation.

[2] Just a few examples: GitHub Unwrapped, Open Source Wrapped, Spotify Wrapped 365, Make a Wrapped